In this article, we will look at the high-level design of a video streaming application such as YouTube or Netflix. Our design for a video streaming service will support the following features:

Functional Requirements

- User should be able to upload videos

- User should be able to view homepage

- User should be able to search videos based on titles.

- User should be able to stream/play videos

- Video should be supported on all devices

Non Functional Requirements

- System should be highly available

- Videos should play with low latency (minimal buffering)

- System should be highly reliable and videos uploaded should not be lost.

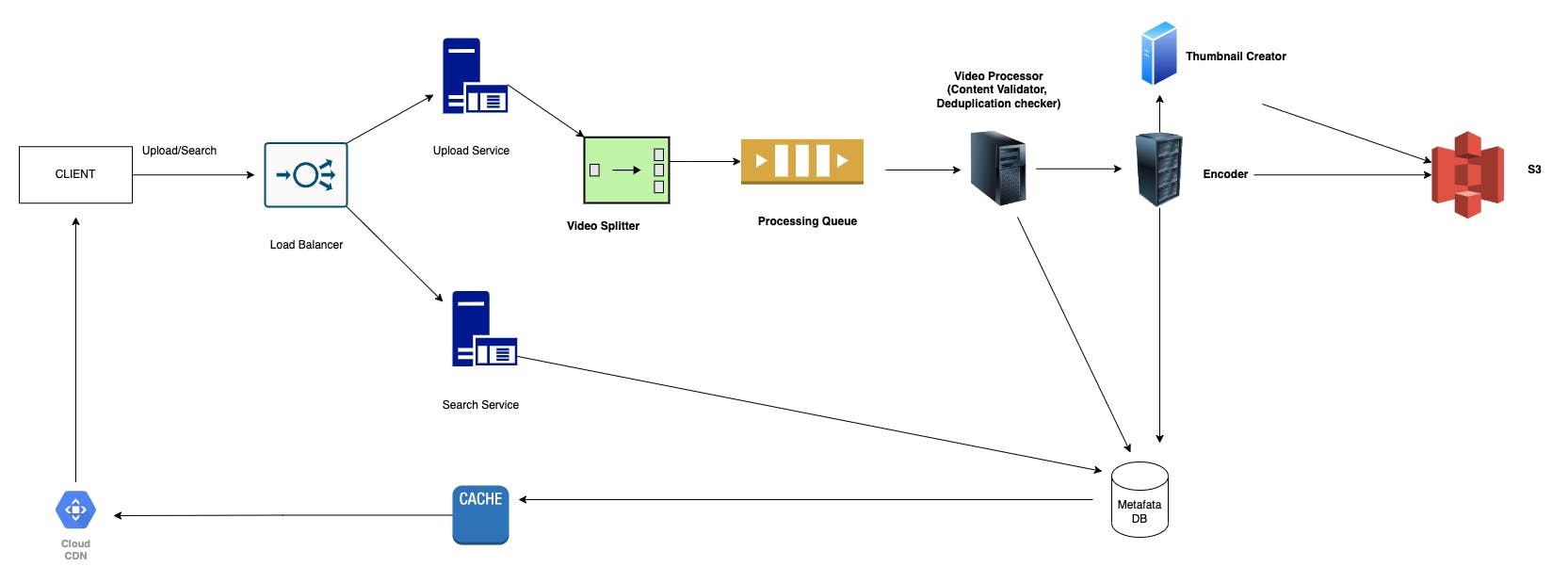

Key Components

File Chunker

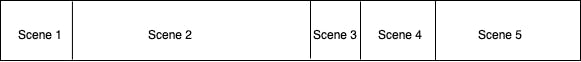

Instead of being stored as a single large file, each uploaded video can be stored in several chunks. This is necessary because an uploader can upload a large video. Processing or streaming a single heavy file can be time-consuming.

When the video is stored and served in chunks, the viewer does not need to download the entire video before playing it. The client requests the first chunk from the server and, while that chunk is playing, requests the next one, so there is minimal lag between chunks and the viewer has a seamless experience.

The file chunker (or splitter) performs this task, dividing the whole video into small chunks.
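As a minimal sketch of the simplest strategy, the function below computes fixed-length time ranges for a video. The function name `chunk_ranges` and the 10-second default are assumptions made for illustration; a real chunker would also have to cut the media at keyframes.

```python
from typing import List, Tuple


def chunk_ranges(duration_s: float, chunk_s: float = 10.0) -> List[Tuple[float, float]]:
    """Return (start, end) time ranges, in seconds, covering the whole video."""
    if duration_s < 0 or chunk_s <= 0:
        raise ValueError("duration must be >= 0 and chunk length must be > 0")
    ranges = []
    start = 0.0
    while start < duration_s:
        # The last chunk is shorter when the duration is not a multiple of chunk_s.
        end = min(start + chunk_s, duration_s)
        ranges.append((start, end))
        start = end
    return ranges


if __name__ == "__main__":
    for start, end in chunk_ranges(25.0):
        print(f"chunk from {start}s to {end}s")
```

The player can then fetch these ranges one at a time, requesting the next range while the current one is playing.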

Instead of cutting chunks at fixed timestamps, we can cut them at scene boundaries (grouping consecutive shots into a scene) for a better user experience. During network issues, the viewer can then watch a whole scene without buffering in the middle of it.

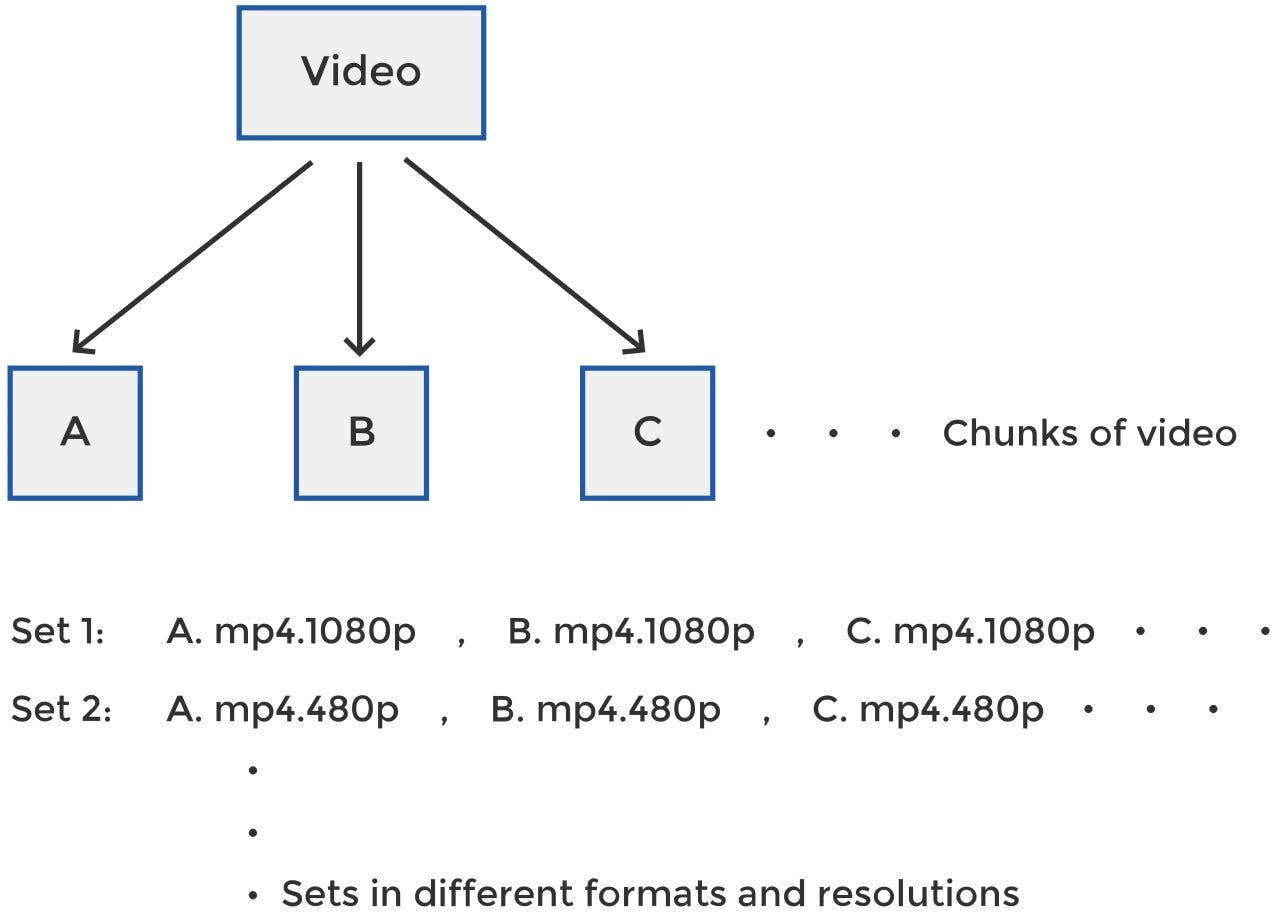

Encoder

To support playback on multiple devices, we need to store each video in multiple formats (e.g. mp4, avi) and in multiple qualities/resolutions (e.g. 1080p, 720p).

If we have i number of formats to support and j number of resolutions, the system will generate i*j number of videos for each video uploaded to the platform. An entry for each of these sets of chunks will be made into the metadata database and a path will be provided for the distributed storage where the actual files are saved.
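To make the i*j count concrete, here is a small sketch that enumerates one storage path per (format, resolution) pair. The path layout and the function name `rendition_paths` are hypothetical; the article does not prescribe a naming scheme.

```python
from itertools import product
from typing import Dict, List, Tuple


def rendition_paths(video_id: str, formats: List[str],
                    resolutions: List[str]) -> Dict[Tuple[str, str], str]:
    """Map every (format, resolution) pair to an illustrative storage path."""
    return {
        (fmt, res): f"videos/{video_id}/{res}/{fmt}/"
        for fmt, res in product(formats, resolutions)
    }


if __name__ == "__main__":
    paths = rendition_paths("abc123", ["mp4", "avi"], ["1080p", "720p"])
    for key, path in paths.items():
        print(key, "->", path)
```

With 2 formats and 2 resolutions this yields 4 entries, each of which would get its own row in the metadata database.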

Based on the device, the user's preference and the available internet connectivity, we can serve the required format and quality.
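One way this selection could work is sketched below: pick the highest resolution whose bitrate fits within the measured bandwidth, leaving some headroom. The bitrate values in `LADDER` and the 0.8 safety factor are illustrative assumptions, not numbers from any real service.

```python
from typing import List, Tuple

# (resolution, required bitrate in kbps), highest quality first. Illustrative values only.
LADDER: List[Tuple[str, int]] = [
    ("1080p", 5000),
    ("720p", 2500),
    ("480p", 1000),
    ("360p", 600),
]


def pick_resolution(bandwidth_kbps: float, safety: float = 0.8) -> str:
    """Return the best resolution that fits in a fraction of the measured bandwidth."""
    budget = bandwidth_kbps * safety
    for resolution, bitrate in LADDER:
        if bitrate <= budget:
            return resolution
    # Nothing fits: fall back to the lowest quality rather than refusing to play.
    return LADDER[-1][0]


if __name__ == "__main__":
    for bw in (10000, 4000, 100):
        print(bw, "kbps ->", pick_resolution(bw))
```

The client can re-run this choice before requesting each chunk, so quality adapts as the connection changes.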

Video Processor

The video processor has the following tasks:

- Validate that the uploaded content complies with the platform rules and does not contain offensive content

- Check whether the video is a duplicate and, if so, whether previously stored chunks can be reused
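The deduplication check can be sketched with content hashing: if two chunks have the same SHA-256 digest, the second one is not stored again. This only catches byte-identical chunks; detecting re-encoded copies of the same video would need a different technique. The class name `ChunkStore` is hypothetical.

```python
import hashlib
from typing import Dict, Tuple


class ChunkStore:
    """In-memory stand-in for chunk storage, keyed by content hash."""

    def __init__(self) -> None:
        self._by_digest: Dict[str, bytes] = {}

    def put(self, data: bytes) -> Tuple[str, bool]:
        """Store a chunk and return (digest, was_newly_stored)."""
        digest = hashlib.sha256(data).hexdigest()
        is_new = digest not in self._by_digest
        if is_new:
            self._by_digest[digest] = data
        return digest, is_new

    def __len__(self) -> int:
        return len(self._by_digest)


if __name__ == "__main__":
    store = ChunkStore()
    print(store.put(b"first chunk"))
    print(store.put(b"first chunk"))  # same digest, not stored twice
```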

Thumbnail generator

Every video should have a thumbnail: a small image file of around 1-5 KB, created for the video, that identifies it on the homepage.

Video and thumbnail storage

The uploaded video and its thumbnail should be stored in an object store or distributed file system such as Amazon S3 or the Hadoop Distributed File System (HDFS).

Video metadata storage

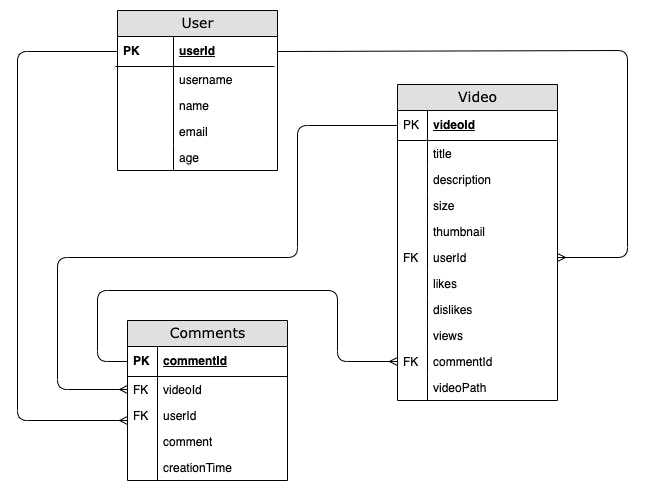

Video metadata such as uploader details, views, likes, dislikes and comments needs to be stored in a video metadata store/DB.

We can use a SQL database as our metadata information would be quite structured. The database tables would look like the following:
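As a rough sketch of one possible schema, the snippet below creates three tables with SQLite. The table and column names are assumptions derived from the metadata fields mentioned above, not the exact tables of any real service.

```python
import sqlite3

# Hypothetical schema covering uploader details, counters and comments.
SCHEMA = """
CREATE TABLE users (
    user_id INTEGER PRIMARY KEY,
    name    TEXT NOT NULL
);
CREATE TABLE videos (
    video_id     INTEGER PRIMARY KEY,
    uploader_id  INTEGER NOT NULL REFERENCES users(user_id),
    title        TEXT NOT NULL,
    storage_path TEXT NOT NULL,
    views        INTEGER NOT NULL DEFAULT 0,
    likes        INTEGER NOT NULL DEFAULT 0,
    dislikes     INTEGER NOT NULL DEFAULT 0
);
CREATE TABLE comments (
    comment_id INTEGER PRIMARY KEY,
    video_id   INTEGER NOT NULL REFERENCES videos(video_id),
    user_id    INTEGER NOT NULL REFERENCES users(user_id),
    body       TEXT NOT NULL
);
"""


def create_metadata_db(path: str = ":memory:") -> sqlite3.Connection:
    """Create the metadata tables and return an open connection."""
    conn = sqlite3.connect(path)
    conn.executescript(SCHEMA)
    return conn


if __name__ == "__main__":
    conn = create_metadata_db()
    conn.execute("INSERT INTO users (user_id, name) VALUES (1, 'alice')")
    conn.execute(
        "INSERT INTO videos (video_id, uploader_id, title, storage_path) "
        "VALUES (1, 1, 'Intro', 'videos/1/')"
    )
    print(conn.execute("SELECT title, views FROM videos").fetchall())
```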

Database sharding

Since we will have a huge volume of data, a single database server will not scale. We therefore shard our data based on userId or videoId. The two approaches compare as follows:

Sharding on userId: Sharding based on userId is not a very good option, because the number of videos uploaded varies from user to user. If a very popular user uploads many videos, data is distributed non-uniformly across our servers, which affects overall performance.

Sharding on videoId: Sharding based on videoId solves the issue of non-uniform data distribution. However, when searching for videos that match an input query, we need to collate data from multiple servers, so we need a centralized aggregator server that aggregates and ranks the results before returning them to the user.
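The videoId approach can be sketched as follows: a hash of the videoId picks the shard, and a search fans out to every shard before an aggregator merges the partial results. Ranking by view count and the in-memory dictionaries standing in for shard servers are assumptions made to keep the example self-contained.

```python
import hashlib
import heapq
from typing import Dict, List, Tuple

Shard = Dict[str, Tuple[str, int]]  # video_id -> (title, views)


def shard_for(video_id: str, num_shards: int) -> int:
    """Pick a shard from a stable hash of the video id."""
    digest = hashlib.sha256(video_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


def search(shards: List[Shard], query: str, k: int = 10) -> List[Tuple[int, str, str]]:
    """Scatter the query to all shards, then gather and rank the top k by views."""
    q = query.lower()
    partial_results = []
    for shard in shards:  # a real system would issue these calls in parallel
        hits = [(views, vid, title)
                for vid, (title, views) in shard.items()
                if q in title.lower()]
        # Each shard only needs to return its own top k.
        partial_results.append(heapq.nlargest(k, hits))
    merged = [hit for hits in partial_results for hit in hits]
    return heapq.nlargest(k, merged)


if __name__ == "__main__":
    shards: List[Shard] = [{} for _ in range(4)]
    for vid, title, views in [("v1", "Cat video", 10), ("v2", "Dog video", 50),
                              ("v3", "Funny cat", 30), ("v4", "cat nap", 20)]:
        shards[shard_for(vid, len(shards))][vid] = (title, views)
    print(search(shards, "cat", k=2))
```

Because each shard returns at most k candidates, the aggregator merges at most k times the number of shards, regardless of how much data each shard holds.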

Data replication

Since our system is read-heavy, we should focus on handling read traffic. To do so, we keep multiple copies of each video so that read traffic can be distributed efficiently across different servers. For the video metadata, we can use a master-slave architecture: writes are allowed only on the master servers, which sync the data to the slave servers asynchronously, for example during off-peak hours. One important point to note is that during the window X in which the slave servers have not yet been updated, read calls on them return stale data. This should be acceptable as long as X is very small, and we take significant load off our system by not keeping the slave servers consistent at all times.
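The read/write split and the stale-read window can be illustrated with a toy in-memory store. The class name `ReplicatedStore` and the explicit `sync()` call are simplifications; a real database would replicate in the background.

```python
from typing import Any, Dict, List, Optional, Tuple


class ReplicatedStore:
    """Writes go to the master; reads are spread round-robin over the slaves."""

    def __init__(self, num_slaves: int) -> None:
        if num_slaves < 1:
            raise ValueError("need at least one slave")
        self.master: Dict[str, Any] = {}
        self.slaves: List[Dict[str, Any]] = [{} for _ in range(num_slaves)]
        self._pending: List[Tuple[str, Any]] = []
        self._next = 0

    def write(self, key: str, value: Any) -> None:
        self.master[key] = value
        self._pending.append((key, value))  # replicated later, not immediately

    def read(self, key: str) -> Optional[Any]:
        slave = self.slaves[self._next % len(self.slaves)]
        self._next += 1
        return slave.get(key)  # may be stale until sync() has run

    def sync(self) -> None:
        """Apply all pending writes to every slave, in order."""
        for key, value in self._pending:
            for slave in self.slaves:
                slave[key] = value
        self._pending.clear()


if __name__ == "__main__":
    store = ReplicatedStore(num_slaves=2)
    store.write("video:1:likes", 10)
    print(store.read("video:1:likes"))  # stale read before sync
    store.sync()
    print(store.read("video:1:likes"))
```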

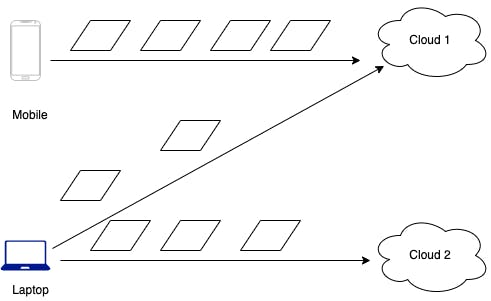

Processing Queue

Since there will be many incoming upload requests, we submit the tasks to a processing queue, and the system picks tasks up from the queue for parallel processing as threads become available. The workers (the encoders) take tasks from the processing queue, encode them into the different formats and store the results in the distributed file storage.
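A minimal sketch of this pattern using a thread-safe queue and a fixed pool of worker threads is shown below. The `encode` callable is a placeholder for the real encoding step, and `run_workers` is a hypothetical name.

```python
import queue
import threading
from typing import Callable, Iterable, List


def run_workers(tasks: Iterable[str], encode: Callable[[str], str],
                num_workers: int = 4) -> List[str]:
    """Drain a task queue with a fixed number of worker threads."""
    task_queue: "queue.Queue[str]" = queue.Queue()
    for task in tasks:
        task_queue.put(task)

    results: List[str] = []
    lock = threading.Lock()

    def worker() -> None:
        while True:
            try:
                task = task_queue.get_nowait()
            except queue.Empty:
                return  # no work left, the worker exits
            output = encode(task)
            with lock:  # protect the shared results list
                results.append(output)

    threads = [threading.Thread(target=worker) for _ in range(num_workers)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return results


if __name__ == "__main__":
    done = run_workers(["chunk-1", "chunk-2", "chunk-3"],
                       encode=lambda name: f"{name}.mp4")
    print(sorted(done))
```

Results arrive in completion order rather than submission order, which is why the usage example sorts them before printing.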

Caching

Going by the 80-20 rule, roughly 20% of the videos generate 80% of the daily read traffic, because certain videos are so popular that the majority of people watch them. Caching these 20% of videos and their metadata is a smart way to avoid repeated work and make our system more efficient.
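One common way to keep the popular items in memory is a least-recently-used (LRU) cache, sketched below. LRU is our assumption here; the article does not name an eviction policy.

```python
from collections import OrderedDict
from typing import Any, Optional


class LRUCache:
    """Fixed-capacity cache that evicts the least recently used entry."""

    def __init__(self, capacity: int) -> None:
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self.capacity = capacity
        self._items: "OrderedDict[str, Any]" = OrderedDict()

    def get(self, key: str) -> Optional[Any]:
        if key not in self._items:
            return None  # cache miss: the caller falls back to the database
        self._items.move_to_end(key)  # mark as most recently used
        return self._items[key]

    def put(self, key: str, value: Any) -> None:
        self._items[key] = value
        self._items.move_to_end(key)
        if len(self._items) > self.capacity:
            self._items.popitem(last=False)  # drop the least recently used entry


if __name__ == "__main__":
    cache = LRUCache(capacity=2)
    cache.put("video:a", {"title": "A"})
    cache.put("video:b", {"title": "B"})
    cache.get("video:a")                   # "a" is now the most recent
    cache.put("video:c", {"title": "C"})   # evicts "b"
    print(cache.get("video:b"), cache.get("video:a"))
```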

CDN

A CDN (content delivery network) is a system of distributed servers and their data centers that caches web content and serves it quickly by reducing the physical distance between the user and the content, based on the user's geographical location. It improves the user experience by keeping videos in multiple places and making sure they are geographically close to the viewer.

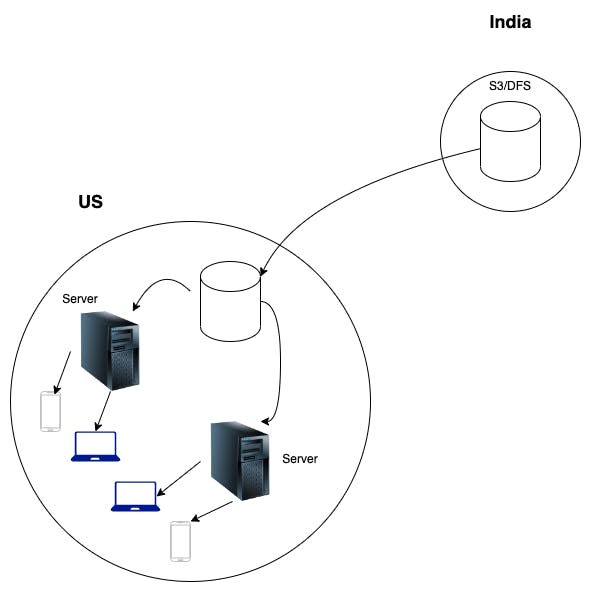

So once the video is processed (split and encoded) and ready for viewing, it is stored in Amazon S3 and also pushed to CDNs around the world. Suppose the video is stored in India and the viewers are in the US. Instead of sending every request along this expensive long-distance route, the system copies the video file from the India-based storage to a storage location in the US once, during off-peak hours. Once the video has reached the region, it is copied to all the CDN servers present in the ISP networks. When the viewer presses the play button, the video is streamed from the nearest CDN server installed at the local ISP and displayed on the viewer’s device.

Some less popular videos not cached in our content delivery network can be served by the servers in various data centers.
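The lookup described above can be sketched as: find the geographically nearest edge server and, if it does not hold the video, fall back to the origin data center. Real CDNs usually route by DNS or anycast rather than by computing distances, so the haversine-based choice and the edge names below are illustrative assumptions.

```python
import math
from typing import Dict, List, Tuple

Location = Tuple[float, float]  # (latitude, longitude) in degrees


def haversine_km(a: Location, b: Location) -> float:
    """Great-circle distance between two points on Earth, in kilometres."""
    lat1, lon1, lat2, lon2 = map(math.radians, (a[0], a[1], b[0], b[1]))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * 6371.0 * math.asin(math.sqrt(h))


def resolve_source(user_loc: Location, video_id: str,
                   edges: List[Dict], origin: str = "origin") -> str:
    """Return the name of the server that should stream the video."""
    nearest = min(edges, key=lambda edge: haversine_km(user_loc, edge["location"]))
    if video_id in nearest["cached"]:
        return nearest["name"]
    return origin  # less popular video: served from the data center


if __name__ == "__main__":
    edges = [
        {"name": "edge-mumbai", "location": (19.08, 72.88), "cached": {"v1"}},
        {"name": "edge-virginia", "location": (38.95, -77.45), "cached": {"v1", "v2"}},
    ]
    delhi = (28.61, 77.21)
    print(resolve_source(delhi, "v1", edges))  # popular video, cached nearby
    print(resolve_source(delhi, "v9", edges))  # not cached at the nearest edge
```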

System Design

Conclusion

This is how Netflix, YouTube or similar video streaming applications onboard videos, keep track of them and display them to millions of users.

Thank you for making it to the end! Feel free to comment, and follow for updates!